Intelligence Crossed the Line. The World Kept Arguing.

The most important revolutions don't start with a bang. They start with everyone agreeing that nothing fundamental has changed, right up until it becomes impossible to pretend otherwise. Artificial intelligence may have crossed that line so quietly that the world is still debating whether the line exists.

When people hear "AGI", they picture a sentient machine: something that wakes up, has a look around, and starts demanding rights. That was never the definition. Artificial General Intelligence, in its original framing, meant a system that could handle intellectual tasks across domains at roughly human level. Not consciousness. Not feelings. Competence. Broad, reliable, general-purpose competence.

A system that drafts software, picks apart a legal contract, explains quantum mechanics, plans a marketing strategy, and composes a decent bit of music, all before tea, covers a remarkable amount of "general". The question is whether it covers enough.

The answer depends entirely on what you think "general" means: broad coverage of common domains, or the rarer human trick of mastering something entirely new from scratch. That distinction is the fault line running through this entire debate, and every objection to calling current AI "intelligent" falls on one side of it or the other.

The Agency Red Herring

The first objection comes up like clockwork: "Sure, it does tasks, but it has no agency. No independent goals. No autonomy."

Intelligence and agency aren't the same thing. Demanding that an AI set its own goals before we call it intelligent is like refusing to acknowledge a mathematician's genius because they can't run a marathon. You can be brilliant without being self-directed.

There is a subtler version of this critique worth taking seriously: current systems still struggle with reliable autonomous competence over long, open-ended tasks without human oversight. That's real. But whether AI systems are early in their trajectory or stuck at a plateau is an empirical question, not a foregone conclusion.

Your Brain Is Not a Single Model

The second objection sounds more technical: current AI relies on multiple models stitched together (one to generate, one to validate, one to orchestrate). Smoke and mirrors, the skeptics say.

They might want to take a closer look at their own brain.

You have roughly 500 million neurons in your gut alone, influencing decisions through chemical pathways your conscious mind has zero access to. Human intelligence was never a single, unified thing. It's a coalition of competing subsystems arguing with each other until something coherent enough to act on finally emerges. The feeling of being "one mind" is just a story your brain tells itself after the fact.

The analogy is imperfect: human subsystems are biologically integrated in ways that engineered multi-agent systems aren't, and engineered coalitions still hallucinate confidently in ways brains generally don't. But the principle survives: if "it needs multiple systems working together" disqualifies AI, then human intelligence never qualified either.

What matters is whether the functional outcomes are converging. By most practical measures they are, which raises an obvious follow-up: prove it.

What the Benchmarks Actually Show

In March 2026, the data arrived. It did not settle the argument. It sharpened it.

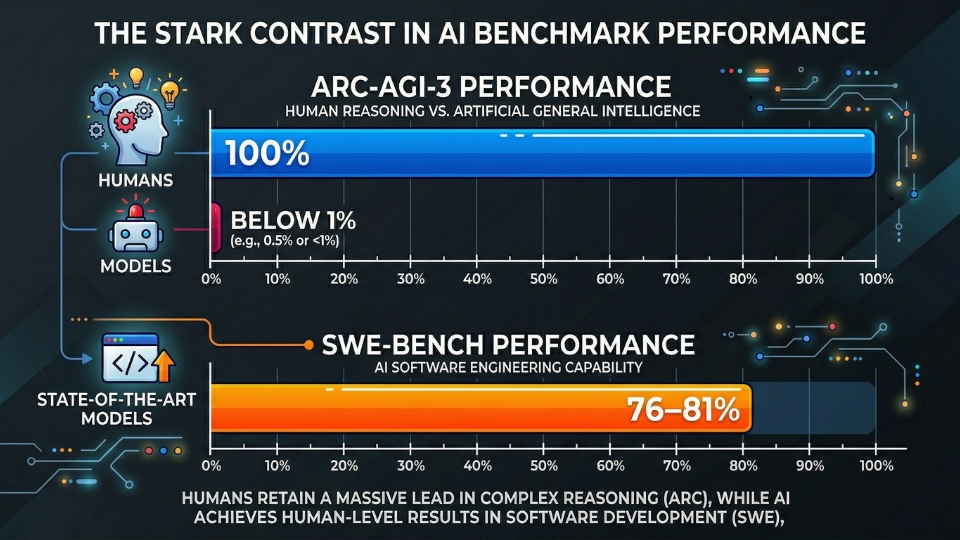

The ARC Prize Foundation released ARC-AGI-3, a benchmark designed to probe the core of what researchers mean by "general" intelligence rather than static pattern-matching. Instead of fixed puzzles, it drops agents into interactive environments where they must explore, infer goals, build world models, and plan, all without being told the objective.

Humans solved 100% of environments. Frontier models on the official leaderboard scored below 1%: Gemini 3.1 Pro Preview at 0.37%, others even lower.

That gap demands an explanation, not a dismissal.

François Chollet, the benchmark's creator, defines intelligence as skill-acquisition efficiency on novel tasks: the ability to walk into something completely unfamiliar and figure it out from scratch with minimal data. By that measure, current AI systems are still far behind us.

Even when researchers built sophisticated harnesses with tool calling and code execution, performance was "extremely bimodal": a harness that scored 97% on environments researchers had seen scored 0% on unfamiliar ones. The infrastructure was brittle, not robust.

This is the strongest argument against calling current systems AGI: they're brilliant within their training distribution and fragile outside it. A meaningful architectural limitation, not a semantic quibble.

But then you look at SWE-Bench. It tests the ability to take messy, real-world bug reports from GitHub and actually fix the code. On the curated Verified set, top models now resolve 79% of issues.

On SWE-Bench Pro, the harder, contamination-resistant version that tests multi-language tasks in unfamiliar codebases, that drops to around 46%.

These benchmarks measure different things, and it matters which one you consider definitional. ARC-AGI-3 tests adaptation to the genuinely novel. SWE-Bench tests useful work within known patterns.

The economy throws both kinds of problems at you, routine and unprecedented, and current AI handles the first category well. The second is where it breaks, and where human intelligence remains irreplaceable. That's the data. Now for what people make of it.

Why Smart People Still Say No

If the case for "AGI is here" were airtight, there wouldn't be a debate. So it's worth understanding the strongest counterarguments: not the strawmen, but the real ones.

Chollet's framework argues that what we're seeing isn't general intelligence but an unprecedented breadth of narrow competence. The model isn't "thinking generally"; it's pattern-matching across a massive training set that happens to cover most common domains. When you push it into truly novel territory, the illusion breaks. ARC-AGI-3 was built specifically to test this, and the results are clear: below 1%.

Many researchers point out that AI reasoning appears to be tethered to domain knowledge in ways human reasoning isn't. Humans can improvise in near-total ignorance, reasoning about things they know almost nothing about. Current models often can't. This suggests a fundamental architectural difference, not just a gap that more training data will close.

And then there's the reliability problem. In professional contexts, "right 80% of the time" isn't the same as "generally intelligent"; it's "occasionally dangerous". A human who's right 80% of the time still has meta-awareness of their uncertainty. They know when they're guessing. Current models often don't, and that gap between confidence and competence is where the real damage happens.

These are serious objections. They deserve weight. But they also assume we've been measuring progress on the right axis, and that assumption is worth questioning.

Nobody Improved the Candle

Electricity didn't arrive because someone built a better candle. It solved the same problems (light, heat, power) through a mechanism so different that the old framework couldn't even describe it.

The same thing is happening now.

Engineers running multi-agent workflows and shipping production code through AI harnesses will tell you something the benchmarks don't fully capture. The models didn't suddenly get dramatically smarter overnight. What changed was the toolkit. Reliable tool calling. Reliable multi-step execution. Reliable self-correction through reviewer models.

The raw intelligence curve was gradual. The capability curve had a cliff, and it came from engineering around the model, not from the model itself getting brighter.

We've been waiting for a brighter candle. What showed up was the lightbulb. And lightbulbs change economies.

The Jevons Paradox

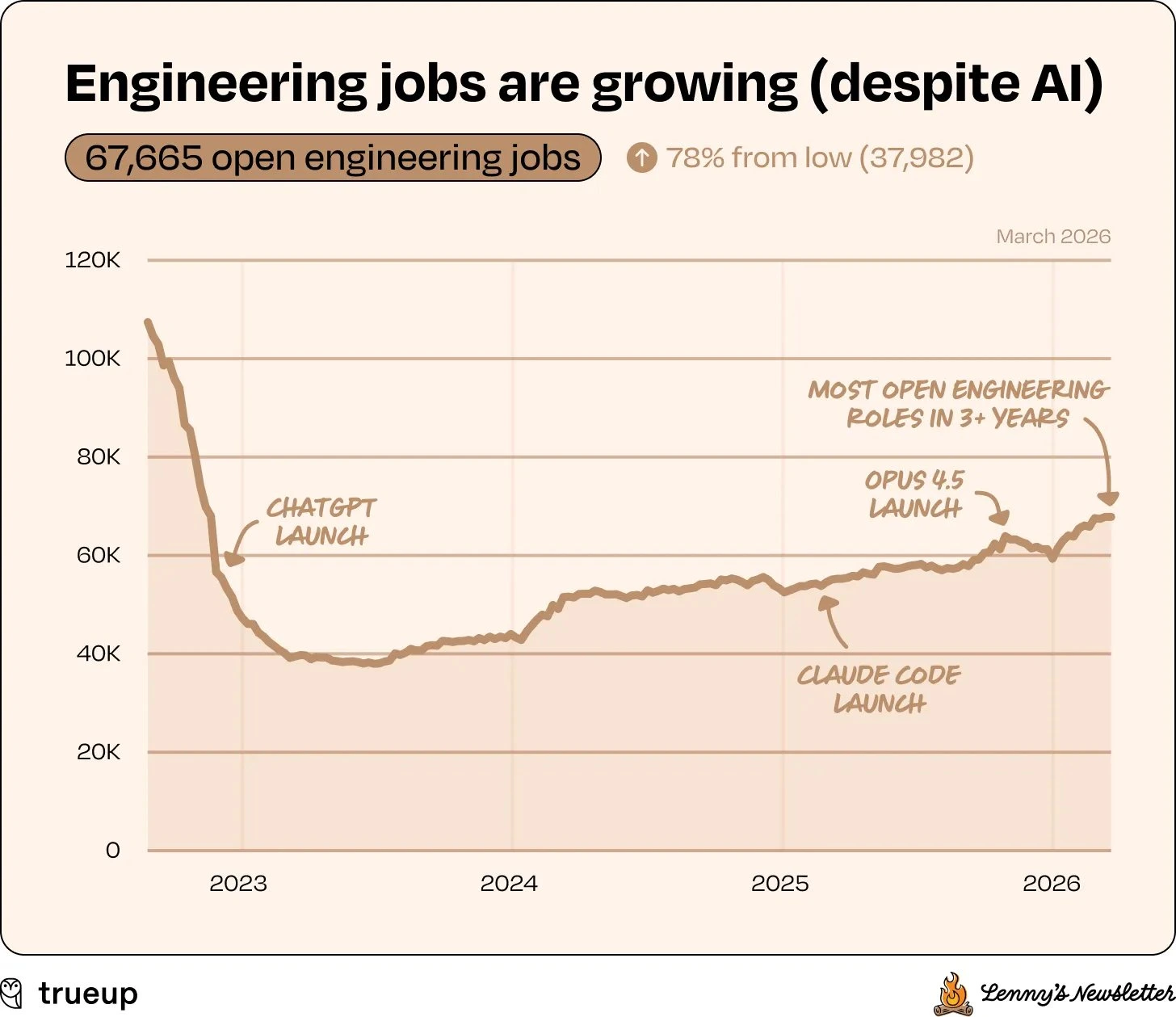

The economic consequences have not waited for the debate to conclude.

What we're witnessing is the Jevons Paradox in real time. Intuitively, making something more efficient should mean you use less of it. With software, the opposite is happening. Because AI has made software steadily cheaper to produce, companies are realizing they can afford projects they wouldn't have touched before: marketing teams automating workflows, healthcare firms accelerating research, small businesses building digital experiences that were previously out of reach.
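To make the mechanism concrete, here is a toy sketch in Python. It assumes, purely for illustration, that the number of software projects a market wants follows a constant-elasticity demand curve; the function names, effort figures, and elasticities are all hypothetical and are not drawn from any of the data discussed here.

```python
# Toy model of the Jevons Paradox applied to software (all numbers hypothetical).
# Assumption: projects demanded follow a constant-elasticity curve,
#   projects = k * effort_per_project ** (-elasticity)


def projects_demanded(effort_per_project: float, elasticity: float, k: float = 100.0) -> float:
    """How many projects get green-lit at a given effort cost per project."""
    return k * effort_per_project ** (-elasticity)


def total_effort(effort_per_project: float, elasticity: float) -> float:
    """Total engineering effort spent across every project that gets built."""
    return effort_per_project * projects_demanded(effort_per_project, elasticity)


if __name__ == "__main__":
    before, after = 1.0, 0.5  # assume AI halves the effort needed per project
    for elasticity in (0.5, 1.5):
        print(
            f"elasticity={elasticity}: total effort "
            f"{total_effort(before, elasticity):.1f} -> {total_effort(after, elasticity):.1f}"
        )
```

When demand is inelastic (0.5), halving the effort per project cuts total effort from 100.0 to about 70.7: the intuitive "use less of it" outcome. When demand is elastic (1.5), so many new projects become worthwhile that total effort rises to about 141.4. That second case is the Jevons Paradox.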

The economic signal tells a split story. Stanford's Digital Economy Lab found that early-career workers aged 22–25 in AI-exposed occupations experienced relative employment declines of roughly 13–16% since LLMs proliferated, with the clearest canary signals in software, customer service, and similar fields. About 55,000 job cuts in 2025 were explicitly attributed to AI, with modeling estimates suggesting 200,000–300,000 actual displaced positions.

But the Dallas Federal Reserve found something more nuanced: employment is lagging in AI-exposed sectors (computer systems design is down roughly 5%), yet wages in that same sector have risen 16.7%, compared with 7.5% nationally. The market is rewarding tacit knowledge and experience.

AI isn't replacing knowledge workers wholesale; it's bifurcating them. Entry-level roles involving well-defined tasks are shrinking. Senior roles requiring judgment and taste are becoming more valuable.

Meanwhile, Anthropic's own research found that actual AI adoption is a fraction of what AI tools are theoretically capable of performing. The gap between capability and deployment is wide, slowed by institutional inertia, trust deficits, and integration complexity. The shift has happened. The adoption just hasn't caught up.

So the macro picture is clear enough: jobs are splitting, not vanishing. But zoom in to the individual, and the question gets more personal: what does a career actually look like inside this shift?

From Navigator to Pilot

For decades, knowledge work has followed a factory logic. Break the work into tickets. Assign tickets to humans. Have a manager check the pile. It's a system Charlie Chaplin satirised nearly a century ago in Modern Times: people reduced to cogs on an assembly line. We just moved the assembly line from the factory floor to JIRA boards and sprint backlogs.

If AI can clear 79% of a curated set of those tickets, the factory floor starts to look very empty.

Commercial aviation once had a dedicated role called the navigator: someone who tracked the aircraft's position manually using star charts, a sextant, and a pencil. It was skilled, essential work. When automated navigation systems, and eventually GPS, arrived, the role vanished. But the people didn't disappear from the industry. Many of them became pilots. The job shifted from "doing the calculation" to "understanding the system and making the decisions".

The Dallas Fed data suggests the same pattern is emerging now. AI substitutes for entry-level workers performing codifiable tasks, but complements experienced workers whose value lies in tacit knowledge, the kind you can only build through years of practice.

You're no longer hired to address tickets. You're hired to own outcomes.

But there's a serious caveat, and it's one the optimists tend to skip. The current model of career progression (take an entry-level job, do codifiable tasks, slowly absorb tacit knowledge) breaks down when AI handles the codifiable layer. If juniors never do the grunt work, how do they develop the judgment that makes them valuable seniors?

This isn't a solved problem. It requires rethinking education, apprenticeship, and professional development from scratch. We need new ladders to replace the ones AI is removing, and we haven't built them yet.

And career ladders aren't the only thing at stake when the machine gets it wrong.

Where 80% Can Kill You

If an AI writes code and introduces a bug, your test suite catches it, you iterate, you ship. Software is inherently resilient to imperfection: a domain where "good enough, then improve" is the accepted workflow.

But what about an AI computing drug interactions for a patient with six prescriptions? An autopilot system making a split-second judgment call at 40,000 feet? In those worlds, 80% accuracy isn't a passing grade; it's a catastrophe. A 20% error rate in aviation means roughly one in five flights has a potentially fatal miscalculation. Nobody boards that plane.

AI has arguably crossed a usefulness threshold for trial-and-error-tolerant domains: software, content, analysis, design, research. But for life-critical systems (medicine, aviation, infrastructure), we aren't anywhere near the reliability required. The gap between "generally capable" and "trustworthy enough to bet a life on" is measured in blood.

The generalization problem makes this worse. The same ARC-AGI-3 results that show near-zero performance on novel environments should give us pause about any system operating in domains where unexpected situations are the norm and the cost of failure is catastrophic.

So What Do We Call It?

If you define AGI as the ability to do useful intellectual work across the board, then it has arrived. It's sitting in your browser, it's checking your code, and it's fuelling a massive expansion of the digital economy.

If you define AGI as that human-like adaptability, the ability to face something completely unfamiliar and master it from scratch, then ARC-AGI-3 quantifies how far we still have to go: below 1%, against humans at 100%.

The disagreement is substantive (it's about what intelligence fundamentally is), but only one side of it is already reshaping people's careers. The practical impact has arrived even if the theoretical threshold hasn't.

What's harder to deny is that something fundamental has shifted. Not a clean threshold, but a slow accumulation of engineering improvements that have made AI systems genuinely capable partners in knowledge work. Whether we call that AGI, proto-AGI, or the most powerful narrow tools in history matters less than whether we're preparing for what comes next.

We wake up, the kettle boils, and we sit down to work with a partner we don't quite understand yet. It isn't the end of the world. It's just a very different sort of Thursday.
